OpenStack with vSphere and NSX

Notice: This article is more than 12 years old. It might be outdated.

In a previous post I already wrote about Physical networks for VMware NSX. Now it's time to put everything together and show you how VMware vSphere, VMware NSX and OpenStack come together to form a cloud with network virtualization via overlay networks.

As this involves quite a few steps, I'll split it into a series of posts, with this one serving as the introduction.

Goal

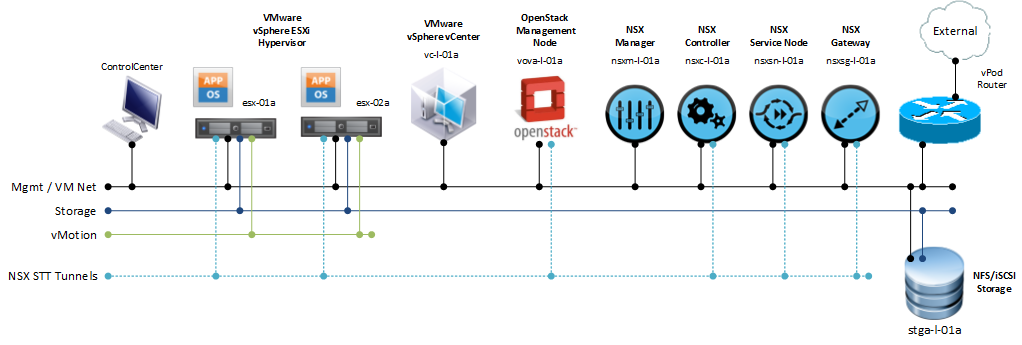

The goal of this series is to deploy an OpenStack cloud that uses VMware vSphere, along with its well-known enterprise-class features such as vMotion, as the underlying Hypervisor. In addition, network virtualization within OpenStack will be provided by VMware NSX via a Neutron plugin. This allows the creation of virtual networks within OpenStack that consist of L2 segments, which can be interconnected via L3 with each other or with the outside world (see Figure 1).

In summary we will end up with:

- A VMware vSphere 5.5 cluster serving as the Hypervisors for our cloud. Well-known features such as vMotion, HA or DRS remain usable.

- VMware NSX for network virtualization, allowing us to create multiple isolated L2 segments per tenant and to interconnect them with each other and with the outside world via L3 services.

- OpenStack as the Cloud Management System (CMS), providing users with a familiar web-based GUI and an easy-to-use API as the front end of our cloud.

Benefits

One might wonder why to choose VMware vSphere as the Hypervisor for such a setup instead of, e.g., KVM. Two main reasons make the presented architecture a viable solution:

- Use of enterprise-class features

Using VMware vSphere with OpenStack presents the entire cluster as a single “node” to OpenStack, allowing administrators to rely on well-known enterprise-class features of the VMware vSphere Hypervisor. These include the Distributed Resource Scheduler (DRS) to better balance the workload across Hypervisors, vMotion to evacuate a Hypervisor for preventive maintenance, and High Availability (HA) to restart workloads after hardware failures.

The predominant model for cloud computing assumes that any component can fail at any time, so the applications running in the workloads must provide redundancy themselves. With VMware vSphere as the Hypervisor for OpenStack, one can deviate from this model and instead offer a highly reliable cloud, similar to the managed service provider offerings based on virtualization today. A hybrid approach is also possible, offering both a pure cloud experience and a highly available experience within the same cloud.

- Ease of deploying VMware vSphere vs. OpenStack with KVM

Deploying OpenStack with KVM is not easy; it is quite a challenging task, which is why various companies, such as Mirantis, try to fill this gap with deployment services or products for OpenStack installation. Deploying a VMware vSphere cluster, on the other hand, is fairly simple, and there are numerous books, hands-on labs and other forms of documentation available to help. Using VMware vSphere as your Hypervisor therefore greatly simplifies the deployment of OpenStack.

Later in this series we will also use the vSphere OpenStack Virtual Appliance (VOVA). VOVA is an appliance built to simplify deploying OpenStack into a VMware vSphere environment for test, proof-of-concept and education purposes. It runs all of the required OpenStack services (Nova, Glance, Cinder, Neutron, Keystone and Horizon) in a single Ubuntu Linux appliance.
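VOVA wires Nova to vCenter for you, so nothing below needs to be done by hand. For orientation only, here is a minimal sketch that renders the kind of nova.conf settings the Nova VMware driver uses to treat a whole vSphere cluster as one compute node. The option names (`compute_driver = vmwareapi.VMwareVCDriver` and the `[vmware]` section with `host_ip`, `host_username`, `host_password`, `cluster_name`) are assumed to match the Havana/Icehouse-era driver and may differ in your release; the helper `render_nova_vmware_conf` and all values passed to it are hypothetical placeholders.

```python
import configparser
import io


def render_nova_vmware_conf(vcenter_host: str, username: str, password: str,
                            cluster_name: str) -> str:
    """Render nova.conf settings that point Nova at a vCenter-managed cluster.

    Hypothetical helper; option names assumed from the Havana/Icehouse-era driver.
    """
    # interpolation=None so that passwords containing '%' are written verbatim.
    conf = configparser.ConfigParser(interpolation=None)
    # The vCenter driver exposes the whole cluster to Nova as a single compute node.
    conf["DEFAULT"] = {"compute_driver": "vmwareapi.VMwareVCDriver"}
    conf["vmware"] = {
        "host_ip": vcenter_host,        # vCenter (e.g. the VCSA) address
        "host_username": username,
        "host_password": password,
        "cluster_name": cluster_name,   # vSphere cluster backing this compute node
    }
    buf = io.StringIO()
    conf.write(buf)
    return buf.getvalue()


# Placeholder values for illustration only.
print(render_nova_vmware_conf("vcsa.example.local", "root", "secret", "Cluster01"))
```

Because `cluster_name` names a whole cluster rather than a single ESXi host, DRS, HA and vMotion keep operating underneath Nova, which is what the benefits above rely on.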

Setup

Please remember that this setup is for test, proof-of-concept and education purposes only. Do not use it in production and do not include any production components in it.

For this setup we will assume the following prerequisites are already in place:

- VMware vSphere cluster

  - Version 5.5 or higher.

  - vCenter can run either on Windows or as the VMware vCenter Server Appliance (VCSA). I will use the VCSA.

  - At least one free vmnic for binding the NSX vSwitch.

  - A single “Datacenter” should be configured in vCenter (this is a temporary limitation, intended as a safety precaution).

  - DRS should be enabled with “Fully automated” placement turned on.

  - The cluster should only have datastores that are shared among all hosts in the cluster. Using a single shared datastore for the cluster is recommended.
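To make the prerequisites above concrete, here is a minimal sketch of a checker that runs over a snapshot of the cluster. The `ClusterInventory` and `Datastore` types and `check_prerequisites` are hypothetical; in practice you would fill them from vCenter (for example via the vSphere API). The DRS behavior strings mirror the vSphere API's `DrsBehavior` values (`manual`, `partiallyAutomated`, `fullyAutomated`), and the free-vmnic requirement is interpreted per host, which is an assumption.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set, Tuple


@dataclass
class Datastore:
    name: str
    mounted_on: Set[str]  # names of the hosts that mount this datastore


@dataclass
class ClusterInventory:
    # Hypothetical snapshot of a vSphere cluster; not a real vCenter API object.
    vsphere_version: Tuple[int, int]
    datacenter_count: int
    drs_enabled: bool
    drs_behavior: str  # "manual", "partiallyAutomated" or "fullyAutomated"
    hosts: List[str]
    free_vmnics: Dict[str, List[str]]  # host name -> unused vmnics
    datastores: List[Datastore] = field(default_factory=list)


def check_prerequisites(inv: ClusterInventory) -> List[str]:
    """Return a list of problems; an empty list means all checks passed."""
    problems: List[str] = []
    if inv.vsphere_version < (5, 5):
        problems.append("vSphere 5.5 or higher is required")
    if inv.datacenter_count != 1:
        problems.append("exactly one Datacenter should be configured in vCenter")
    if not inv.drs_enabled or inv.drs_behavior != "fullyAutomated":
        problems.append("DRS must be enabled and set to fully automated")
    for host in inv.hosts:
        # Assumption: every host needs its own free uplink for the NSX vSwitch.
        if not inv.free_vmnics.get(host):
            problems.append(f"host {host} has no free vmnic for the NSX vSwitch")
    cluster_hosts = set(inv.hosts)
    for ds in inv.datastores:
        # A datastore counts as shared only if every cluster host mounts it.
        missing = cluster_hosts - ds.mounted_on
        if missing:
            problems.append(f"datastore {ds.name} is not mounted on {sorted(missing)}")
    return problems
```

The single-shared-datastore recommendation is deliberately not enforced, since the text above states it as a recommendation rather than a requirement.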

As part of this walk-through series, we will add the following components:

- VMware NSX cluster

  - A single NSX Controller. Note that in a production environment VMware NSX requires three or five NSX Controllers deployed as a cluster on physical hardware. As this setup is for test, proof-of-concept and education purposes only, a single Controller inside a VM is sufficient.

  - An NSX Manager instance inside a VM.

  - An NSX Service Node instance inside a VM.

  - An NSX Gateway instance inside a VM.

- vSphere OpenStack Virtual Appliance (VOVA)

  - A single instance of the vSphere OpenStack Virtual Appliance (VOVA).
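Why three or five Controllers in production? Assuming the Controller cluster, like most clustered control planes, needs a majority of its members to stay available (an assumption for illustration, not a statement about NSX internals), the arithmetic below shows how many Controller failures a cluster of a given size can survive. The function name `tolerated_failures` is hypothetical.

```python
def tolerated_failures(controllers: int) -> int:
    """Failures a majority-quorum cluster of this size can survive (assumed model)."""
    if controllers < 1:
        raise ValueError("need at least one controller")
    majority = controllers // 2 + 1  # smallest group that still forms a majority
    return controllers - majority


for n in (1, 3, 4, 5):
    print(n, tolerated_failures(n))
# prints:
# 1 0
# 3 1
# 4 1
# 5 2
```

Under this model a single Controller tolerates no failure at all, which is acceptable for a lab but not for production, and a fourth Controller adds nothing over three, which is why odd cluster sizes are used.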

The resulting setup will look like Figure 2.

In this setup we will use a very simple physical network setup. All components will attach to a common Mgmt / VM Network. Only Storage (iSCSI/NFS) and vMotion will use dedicated isolated networks (e.g. VLAN) according to VMware vSphere best practices. As indicated in Figure 2, the Hypervisors as well as the NSX Controller, NSX Gateway and NSX Service Node will form an overlay network via STT tunnels. Please do not use such a simple network setup, sharing management and tenant traffic on the same network segment, in a production environment!

Steps

The required installation and configuration steps include:

- Install and configure the VMware NSX appliances

- Create and configure the VMware NSX cluster

- Install and configure the Open vSwitch inside the ESXi hosts

- Import and configure the VMware vSphere OpenStack Virtual Appliance (VOVA)

- Create virtual networks and launch a VM instance in OpenStack

- Configure the VMware vCenter Plugin for OpenStack and look behind the scenes of OpenStack on vSphere
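For the step that creates virtual networks, a minimal sketch of the Neutron calls involved might look like the following. It assumes a python-neutronclient `neutronclient.v2_0.client.Client` instance, but any object with the same `create_network`, `create_subnet`, `create_router`, `add_interface_router` and `add_gateway_router` methods works. The helper `build_tenant_topology`, the network name, the CIDR and the external network ID are hypothetical placeholders.

```python
from typing import Any, Dict, Optional


def build_tenant_topology(neutron: Any, name: str, cidr: str,
                          external_network_id: Optional[str] = None) -> Dict[str, str]:
    """Create one L2 network with an IPv4 subnet and attach it to a new router.

    `neutron` is expected to behave like python-neutronclient's v2.0 Client.
    """
    # L2 segment: with the NSX plugin this is expected to map to an NSX logical switch.
    net = neutron.create_network({"network": {"name": name, "admin_state_up": True}})["network"]
    subnet = neutron.create_subnet({"subnet": {
        "network_id": net["id"],
        "name": f"{name}-subnet",
        "ip_version": 4,
        "cidr": cidr,
    }})["subnet"]
    # L3 service: a router interconnects tenant subnets and, optionally, the outside world.
    router = neutron.create_router(
        {"router": {"name": f"{name}-router", "admin_state_up": True}})["router"]
    neutron.add_interface_router(router["id"], {"subnet_id": subnet["id"]})
    if external_network_id is not None:
        # Setting the gateway connects the router to an external network.
        neutron.add_gateway_router(router["id"], {"network_id": external_network_id})
    return {"network_id": net["id"], "subnet_id": subnet["id"], "router_id": router["id"]}
```

Taking the client as a parameter keeps the sketch independent of how credentials are obtained; in a real deployment you would pass a client created with your Keystone credentials and then boot the VM instance on the new network through Nova or Horizon.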
